Symfony 4 made a deliberate choice to add new features that support microservices. Symfony Flex and its recipes make it easy to create a project with a minimal set of dependencies. For example, the Twig (templating) component is not loaded when creating a minimal API application:

```shell
composer create-project symfony/skeleton my-project
cd my-project
composer req api
```

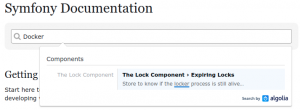

This makes Symfony 4 a good choice for setting up microservices, especially in combination with the reduced complexity of bundle-less applications and sensible default configuration. I was therefore surprised to find not a single mention of Docker in the official Symfony documentation.

For local development, Symfony recommends using PHP's built-in web server, and it provides a Symfony wrapper around it: composer req server --dev. When the application has to be deployed to production, you should not use this server; you will most likely use NGINX or Apache instead. Combine setting up your own web server with the deployment steps, and deploying your application becomes quite a complex task. The complexity gap between creating a local application and shipping it to production is larger than it should be. If you are going for a microservice architecture, dockerizing your application is the sensible option.

Composer dependencies can differ between environments

When updating your dependencies, Composer takes the software installed on your machine (such as your PHP version and extensions) into account. This can cause errors when your local development stack differs from the production stack (e.g. a different PHP version). Most of the time the dependencies will be compatible with slightly different versions, but when problems do occur in production they are likely to be difficult to reproduce (in the best case, you are notified when running composer install). Therefore, the Symfony documentation notes:

“Don’t forget that deploying your application also involves updating any dependency (typically via Composer)…”

Using the same software versions in your local environment as in production mitigates much of this risk. So how can we do this with Docker?
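Composer's real dependency resolver is far more involved, but the failure mode described above can be illustrated with a single platform check. The following Python is a minimal sketch that only models Composer's caret operator, where `^X.Y.Z` allows versions `>= X.Y.Z` and `< (X+1).0.0`; the helper names, the `^7.1.3` requirement and both PHP versions are hypothetical values chosen for illustration.

```python
from __future__ import annotations


def parse_version(text: str) -> tuple[int, int, int]:
    """Turn '7.1.3' (or '7.2') into a comparable (major, minor, patch) tuple."""
    parts = [int(p) for p in text.split(".")]
    while len(parts) < 3:
        parts.append(0)
    return tuple(parts[:3])


def satisfies_caret(version: str, constraint: str) -> bool:
    """True if `version` satisfies a caret constraint such as '^7.1.3'.

    Sketch only: handles caret constraints with a non-zero major version,
    where '^X.Y.Z' means '>= X.Y.Z and < (X+1).0.0'. Real Composer supports
    many more constraint forms and also checks PHP extensions.
    """
    if not constraint.startswith("^"):
        raise ValueError("this sketch only understands caret constraints")
    lower = parse_version(constraint[1:])
    if lower[0] == 0:
        raise ValueError("0.x caret constraints are not handled in this sketch")
    upper = (lower[0] + 1, 0, 0)
    return lower <= parse_version(version) < upper


# Hypothetical lock entry: the package version Composer picked on the
# developer's machine requires PHP ^7.1.3 (values made up for illustration).
LOCKED_REQUIREMENT = "^7.1.3"

for env, php_version in {"local": "7.2.10", "production": "7.0.30"}.items():
    ok = satisfies_caret(php_version, LOCKED_REQUIREMENT)
    print(f"{env:<10} PHP {php_version}: {'ok' if ok else 'platform requirement fails'}")
```

Run against the two hypothetical environments, the local check passes while the production check fails: a lock file resolved on one machine cannot be installed on the other. Running Composer inside the same image you deploy removes that mismatch.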

Docker images and containers

The most important distinction in the Docker world is between images and containers. A container is created from an image. Images are built from Dockerfiles, pushed to a Docker registry, and can then be deployed as containers in different ways: docker run, docker-compose run, and docker stack deploy.

My preferred practice is to use docker-compose files to specify how containers should be started. The benefit of these files is that you can use them with both Docker Compose and Docker Swarm (docker stack deploy). The Docker images themselves are built from separate Dockerfiles.

The production containers can differ from the containers you use in your local environment. For example, you do not want Composer or Xdebug in your production containers, but you do want them locally. It is therefore best to define these images and containers in separate files. The example below contains the configuration for a working NGINX, PHP-FPM and MySQL stack for a local environment. The production files can be similar but contain fewer packages (e.g. no Git and Composer for PHP-FPM) and different environment variables.

Example NGINX – PHP-FPM setup

docker-compose.yml (local development):

```yaml
version: "3"

services:
  fpm:
    build: # Info to build the Docker image
      context: . # Where the Dockerfile is located (e.g. in the root directory of the project)
      dockerfile: Dockerfile-php # The name of the Dockerfile
    environment: # Environment variables can be set here, or in the .env file.
      - DATABASE_URL=mysql://root:root@db:3306/project_db # Connection string for the database.
    volumes:
      - ./:/var/www/project/ # Location of the project for php-fpm. This must be the same path as for NGINX.
    networks:
      - symfony # Services that need to connect to each other must be on the same network.

  nginx:
    build:
      context: .
      dockerfile: Dockerfile-nginx
    volumes:
      - ./:/var/www/project/
    ports:
      - "8001:80" # Port 8001 on the host is forwarded to port 80 of the container.
    networks:
      - symfony

  db:
    image: mysql:5.6
    environment:
      - MYSQL_ROOT_PASSWORD=root # Sets the MySQL credentials to root:root.
    volumes:
      - symfony_db:/var/lib/mysql:cached # Persist the database in a Docker volume.
    ports:
      - "3311:3306"
    networks:
      - symfony

volumes:
  symfony_db:

networks:
  symfony:
```
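Because all three services share the `symfony` network, containers reach each other by service name, which is why `DATABASE_URL` points at host `db` on port 3306, while the `ports` entries only matter for connections from your own machine. The Python sketch below makes that distinction explicit using the values from the compose file; the function names are hypothetical, and it assumes the published ports are reachable on `127.0.0.1`, as is typical for Docker on Linux or Docker Desktop.

```python
from __future__ import annotations

from urllib.parse import urlsplit

# Values copied from the docker-compose.yml above.
DATABASE_URL = "mysql://root:root@db:3306/project_db"
PUBLISHED_PORTS = {"nginx": ["8001:80"], "db": ["3311:3306"]}


def parse_port_mapping(mapping: str) -> tuple[int, int]:
    """Split a short-syntax "HOST:CONTAINER" entry into (host_port, container_port).

    Sketch only: the optional IP prefix, port ranges and "/udp" suffixes
    that Compose also accepts are not handled.
    """
    host_port, container_port = mapping.split(":")
    return int(host_port), int(container_port)


def db_endpoints(url: str, published: dict[str, list[str]]) -> dict[str, tuple[str, int]]:
    """Return where the database is reachable from inside the network and from the host."""
    parts = urlsplit(url)
    # Inside the "symfony" network the hostname is simply the service name.
    endpoints = {"inside": (parts.hostname, parts.port)}
    # From the host, look for the published port that maps to the URL's port.
    # Assumption: published ports are reachable on 127.0.0.1.
    for mapping in published.get(parts.hostname, []):
        host_port, container_port = parse_port_mapping(mapping)
        if container_port == parts.port:
            endpoints["host"] = ("127.0.0.1", host_port)
    return endpoints


endpoints = db_endpoints(DATABASE_URL, PUBLISHED_PORTS)
print(endpoints["inside"])  # ('db', 3306): used by the fpm container
print(endpoints["host"])    # ('127.0.0.1', 3311): used by a MySQL client on your machine
```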

Dockerfile-php (the soap extension was needed for a specific application):

```dockerfile
FROM php:fpm

# libzip-dev is needed to compile the zip extension on newer (PHP 7.3+) base images
RUN apt-get update && apt-get install -y --no-install-recommends \
        git \
        zlib1g-dev \
        libzip-dev \
        libxml2-dev \
    && docker-php-ext-install \
        pdo_mysql \
        soap \
        zip \
    && rm -rf /var/lib/apt/lists/*

# Install Composer globally
RUN curl -sS https://getcomposer.org/installer | php && mv composer.phar /usr/local/bin/composer

COPY . /var/www/project
WORKDIR /var/www/project/
```

Dockerfile-nginx:

```dockerfile
FROM nginx:latest
COPY build/nginx/default.conf /etc/nginx/conf.d/
COPY . /var/www/project
```

build/nginx/default.conf:

```nginx
server {
    listen 80;
    root /var/www/project/public;

    location / {
        try_files $uri /index.php$is_args$args;
    }

    location ~ ^/index\.php(/|$) {
        # Connect to the Docker service named fpm
        fastcgi_pass fpm:9000;
        fastcgi_split_path_info ^(.+\.php)(/.*)$;
        include fastcgi_params;
        fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;
        fastcgi_param DOCUMENT_ROOT $realpath_root;
        internal;
    }

    location ~ \.php$ {
        return 404;
    }

    error_log /dev/stdout info;
    access_log /var/log/nginx/project_access.log;
}
```
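The interplay of the three `location` blocks is easy to misread. As a rough sketch, assuming only these blocks matter and representing the files under `public/` as a plain set of paths, the routing decision could be modelled in Python like this (`route` and its return strings are hypothetical):

```python
from __future__ import annotations

import re

# Regex locations from the server block above, in the order nginx checks them.
INDEX_RE = re.compile(r"^/index\.php(/|$)")
PHP_RE = re.compile(r"\.php$")


def route(uri: str, args: str, public_files: set[str]) -> str:
    """Describe what the server block does with a request for `uri?args`.

    Sketch only: `public_files` stands in for the files that exist under
    /var/www/project/public; everything else nginx does is ignored.
    """
    # Regex locations take precedence over the plain `location /` prefix.
    if INDEX_RE.search(uri):
        return "404 (location is internal)"  # external requests to it are rejected
    if PHP_RE.search(uri):
        return "404 (direct .php access blocked)"
    # location / { try_files $uri /index.php$is_args$args; }
    if uri in public_files:
        return f"static file {uri}"
    # $is_args is "?" only when the request has a query string.
    target = "/index.php" + ("?" + args if args else "")
    # The internal redirect is allowed into the ^/index\.php location,
    # which passes the request to PHP-FPM.
    return f"fastcgi fpm:9000 {target}"


files = {"/favicon.ico", "/build/app.css"}
for uri, args in [("/build/app.css", ""), ("/api/books", "page=2"), ("/index.php", ""), ("/config.php", "")]:
    print(uri, "->", route(uri, args, files))
```

A request for an existing asset is served directly, any other path is handed to Symfony's front controller, and direct requests to `index.php` or any other `.php` file end in a 404.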

Dockerfile-mysql (optional: the docker-compose.yml above uses the mysql:5.6 image directly):

```dockerfile
FROM mysql:5.6
```

Together with your Symfony project, these files should give you a working NGINX/PHP-FPM/MySQL setup once Docker is installed: run docker-compose up -d and the application is available on port 8001.
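The containers keep starting in the background after `docker-compose up -d` returns, so the first requests may fail. A small Python smoke check, sketched below under the assumption that NGINX is published on port 8001 as in the compose file, could poll the site until it responds. `wait_until_up` is a hypothetical helper, and treating any status below 500 as "up" is a design choice: NGINX answers with 502 Bad Gateway while it cannot reach PHP-FPM, whereas a 404 still means the stack is serving requests.

```python
import time
import urllib.error
import urllib.request


def wait_until_up(url: str, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll `url` until it answers with a status below 500, or give up.

    Returns True as soon as the server answers (even a 404 counts),
    False if `timeout` seconds pass without such an answer.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            with urllib.request.urlopen(url, timeout=2.0):
                return True  # 2xx/3xx: the stack is serving requests
        except urllib.error.HTTPError as err:
            if err.code < 500:
                return True  # 4xx: the server answered, treat it as up
            # 5xx (e.g. 502 Bad Gateway): NGINX is up, PHP-FPM is not ready yet
        except OSError:
            pass  # connection refused or reset: nothing is listening yet
        time.sleep(interval)
    return False
```

For example, `wait_until_up("http://localhost:8001/")` returns `True` once the stack serves requests, or `False` after 30 seconds.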

Discussion

I hope more Symfony developers will use Docker for their projects. Hopefully, there will be an official Docker image for Symfony in the future that we can use as the base image for our projects; WordPress and Drupal, for example, both have official images. There are many community-contributed Symfony images, but these can be insecure and are often not maintained. Do you think Symfony should embrace the Docker ecosystem?

8 Comments

revollat · June 12, 2018 at 7:35 am

Hi, I have an article on a similar subject: https://medium.com/@olivier.revollat/quick-symfony-4-sandbox-with-docker-87017edfe24e

nielsvandermolen · June 12, 2018 at 11:35 am

The next article is out, where we use a related Docker setup to build an API application with Symfony 4:

https://www.nielsvandermolen.com/symfony-4-api-platform-application/

A GitHub repository is included.

Carles · June 22, 2018 at 11:32 am

“Do you think Symfony should embrace the Docker ecosystem?” I think not. Symfony devs should maintain the platform. Their users should decide whether they use the platform with Docker, a VirtualBox image or directly on an Apache / Nginx server. What’s more, whether you use Symfony with Docker or directly with the web server, the main difference is the environment where the app runs rather than the configuration. I have some Symfony apps in production served directly by Nginx, and I don’t see much difference in terms of configuration from your example. I have more or less the same nginx configuration; the main difference is that I use subdomains instead of a simple “listen all” directive.

Anyway, what’s true is that you can have an isolated environment with Docker, so you can update containers without affecting the other ones. In my company’s situation, if I upgrade the PHP version, for example, it affects all projects at the same time.

nielsvandermolen · June 22, 2018 at 1:39 pm

Thanks for your comment, Carles. I agree that the user should decide what method or platform they want to use. However, I do not see a reason not to include Docker in these options officially.

Currently, with the built-in PHP web server, Symfony focusses mainly on the PHP world, and you should not use that server in production. With the support of official Docker images, you open up Symfony to people who might never have used PHP. For example, this blog runs on the official Docker image of WordPress, and it required no PHP or WordPress knowledge to get it running. Furthermore, I am not the only one who uses Docker images this way. The WordPress, Drupal and Django images all have over 10M pulls each.

Further advantages of having an official repo are that you quickly see the supported versions of PHP and Symfony and security fixes can spread quickly to a broad audience. Now, we have a lot of wasted time, because everyone that wants to run Symfony on Docker has to reinvent the wheel and look at how they can set up their images. Proof of this is the 1245 public repositories on the Docker Hub that contain Symfony.

https://docs.docker.com/docker-hub/official_repos/

https://github.com/docker-library/official-images/tree/master/library

Docker Exe · May 16, 2019 at 8:43 pm

There are many reasons NOT to use docker – e.g. you did not mention the word “security” in your blog post – this alone is a red flag and might be a hint that you are not really qualified to educate anybody about deployment of PHP apps via Docker.

You must understand that Docker is NOT for “quick deployments” – you will just pull in a lot of more complexity into your deployment process. You have to understand what you are doing – using Docker for “quick deployment” is a cancer mindset that keeps people stupid, similar to Windows “just click that exe” – it seems to work, but you know nothing about what really happens behind the scenes – this is not the way to go with real life server deployments! If you want that, better use AWS or some other PHP service provider.

If you want to deploy via docker – what seems a very strange deployment strategy for a simple PHP app, as PHP is “microservice on every request” anyway – you take responsibility for all the images used and need to understand all security details of a Docker setup – I guess that is not the case. Instead you seem to think of Docker as a “quick fix” – the result is that you are now running some blackbox software on your servers that you have no clue about what to look for and how to fix problems. Bad! Chances are good that you will be hacked sooner or later with such a setup.

Docker is not a “quick and dirty deployment tool” as you are using it here. There are some reasons to use Docker, but certainly not with PHP.

I would suggest removing that post, as thousands of not-so-well educated PHP developers will follow your path and again we will have lots of security problems for the next ten years because of PHP developers not understanding what they are doing. Please stop that! Thanks!

nielsvandermolen · May 17, 2019 at 11:47 am

It seems you don’t understand the security implications of using Docker. Imagine a common use case of having a server with multiple PHP applications. If one application gets hacked in a common LAMP stack, it has at least read access to all other applications. If one Docker container is breached, it only has access to a single application. A Docker setup is by default very secure:

https://docs.docker.com/engine/security/security/

There is indeed added complexity in setting up Docker which I discuss partly in this article:

https://www.nielsvandermolen.com/continuous-integration-jenkins-docker/

There are additional security issues in Docker. One is the trust of the images you use. Partly for this reason, I make the case that Symfony needs an official image (official images are reviewed and can be as secure as Symfony itself). All the best security practices for Symfony can be applied in the image, making all applications more secure than in the current situation where everybody creates their own images.

I think you are projecting because I never make the case that Docker is quick and that you should not be careful when switching over to new technologies on your development servers. I make the case that Docker is better: better composer builds, better CI workflows, better security, better cooperation on ops, for many applications and that we need official support for it in Symfony!

Most applications we create today are not simple anymore. For example, check out the Messenger example where we need 5 separate container images (including RabbitMQ).

https://github.com/nielsvandermolen/example-symfony-messenger/blob/master/docker-compose.yml

Or check the docker-compose file of API Platform where there is support for Mercure, Varnish, React (Admin Component).

https://github.com/api-platform/api-platform/blob/master/docker-compose.yml

There are certainly good reasons to use Docker for PHP applications and I will not remove the post!

Olga · May 21, 2019 at 11:33 am

One does not pull random images on dev machines that carry code that goes into production. Of course in a secure dev environment you will not even have access to the famous “Docker Registry of Backdoored Images”.

Why are you promoting insecure setups, like Dockerfiles from “somewhere from the internet” and containers that run as root? Why not teach people that they have to build their images themselves with all the small but important details if they want security? Networking? File access? Logging?

Ah, suddenly it is not so easy anymore and one realizes that Docker delivers an extreme overhead that is only “convenience on one click” if you accept Docker as a blackbox, but that is a mindset that is pure anti-security and when you look deeper into Docker, you will see how rotten everything is.

We all got a very clear message with the latest Docker security issues – exploits in the most downloaded images, yes, fine! Understand that! Take a seat, relax, try to get rid of emotions and illusions about “infra power at my fingertips” and look at it with a cold, rational mind.

Docker is never used outside of virtual machines in real life – why do you think that happens? Because all Docker containers share the same kernel – one exploit gets you all the containers.

Docker is like “WordPress for infra”, but out of the box it is much worse, like one WordPress installation on a shared server configured with the same PHP user for all domains.

Why are many younger devs, especially PHP and JavaScript guys, so stubbornly refusing to adopt the wisdom collected by generations of programmers before?

Pulling more code in will never give you more security, a very obvious thing.

Keep as much code and tools as possible out of production.

Yes, you want automated deployments, and this is the key to reproducible infrastructure, but keep Docker out.

And do not even start with Kubernetes – an energy-wasting CPU hog that will lead to much higher energy consumption in all data centers – a crazy, irresponsible choice, but the K8S-train is already steaming through the industry and years of kernel optimization go out the window, in total denial of global climate trends – hipsters blindly destroying the human race!

nielsvandermolen · May 21, 2019 at 11:58 am

I promote the use of “official images” and that Symfony gets an “official” image. Nginx and PHP have official images which are used in the Dockerfiles in this article.

https://docs.docker.com/docker-hub/official_images/

Exploits are everywhere (anyone remember Heartbleed?). The key is to be able to quickly update and deploy the fixes before they can be exploited. How fast are you able to update all your applications in a big monolithic infrastructure? Do you even know all the vulnerabilities in your code? How much automated vulnerability scanning did you implement in your infrastructure?

I use the root user for MySQL only as an example. The PHP container, however, is based on the official PHP image, so the user is www-data. Do you apply all the best practices for your PHP applications in your automated infrastructure, like in this Dockerfile?

https://github.com/docker-library/php/blob/85b2af63546309c3c7b895524db10ef02aa4edba/7.3/stretch/fpm/Dockerfile